Unlocking the Mystery behind the OpenDJ User Database

One question that arises time and time again concerns how OpenDJ stores its entry data and how this differs from the Oracle Directory Server Enterprise Edition (previously known as Sun Directory Server Enterprise Edition).

The following information is from ForgeRock’s OpenDJ Administration, Maintenance and Tuning Class and has been used with the permission of ForgeRock.

OpenDJ includes the Berkeley DB Java Edition database as the backend repository for user data. The Java version is quite different from the Berkeley C version which is used by the Sun Directory Server Enterprise Edition.

The Berkeley DB Java Edition is a Java implementation of a raw database using the B-Tree technology. A Berkeley DB JE environment can be composed of multiple databases, each of which is stored in a single folder on the file system. Rather than having separate files for records and transaction logs, Berkeley DB JE uses a rolling log file to store everything; this includes the B-Tree structure, the user provided records and the indexes. Write operations append entries as the last items in the log file. When a certain size is reached (10MB by default), a new log file is created. This results in consistent write performance regardless of the database size.

Note: Initial log files are located beneath the db/userRoot folder in the installation directory. The initial log file is 00000000.jdb. When that file reaches a size of 10MB, a new file is created as 00000001.jdb.
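The rolling behaviour described above can be sketched in a few lines of Python. This is only an illustration of the append-and-roll idea, not Berkeley DB JE code: the `RollingLog` class and `log_file_name` helper are hypothetical names, and the eight-digit hexadecimal numbering is an assumption based on the file names shown in the note.

```python
import os
import tempfile

MAX_LOG_SIZE = 10 * 1024 * 1024  # 10 MB, the default size mentioned in the text


def log_file_name(index: int) -> str:
    """Build names such as 00000000.jdb and 00000001.jdb (hex numbering assumed)."""
    return f"{index:08x}.jdb"


class RollingLog:
    """Toy append-only log that starts a new file once the size limit is reached."""

    def __init__(self, directory: str, max_size: int = MAX_LOG_SIZE) -> None:
        self.directory = directory
        self.max_size = max_size
        self.index = 0

    def _current_path(self) -> str:
        return os.path.join(self.directory, log_file_name(self.index))

    def append(self, record: bytes) -> str:
        """Append a record to the newest file and return that file's name."""
        path = self._current_path()
        size = os.path.getsize(path) if os.path.exists(path) else 0
        # Roll over only if the current file already holds data and would overflow.
        if size > 0 and size + len(record) > self.max_size:
            self.index += 1
            path = self._current_path()
        with open(path, "ab") as handle:
            handle.write(record)
        return os.path.basename(path)


# A tiny limit makes the rollover visible without writing 10 MB of data.
with tempfile.TemporaryDirectory() as tmp:
    demo_log = RollingLog(tmp, max_size=32)
    demo_names = [demo_log.append(b"x" * 10) for _ in range(5)]
    print(demo_names)
```

Because every write goes to the end of the newest file, the cost of a write does not depend on how many older files exist, which is the property the text attributes to the rolling log.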

Over time records are deleted or modified in the log. OpenDJ performs periodic cleanup of log files and rewrites them to new log files. This task is performed without action by a system administrator and ensures consistency of the data contained in the log files.

You can see a list of all entries contained in the database with the dbtest utility. This command returns the entry specific information that can also be used to debug the backend.

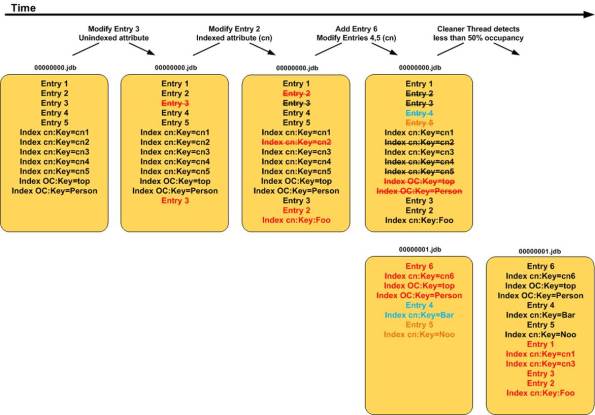

The following diagram demonstrates the periodic processing of the Berkeley DB Java Edition database over time.

Log (Entry) Processing

The log shown at the far left (row 1, column 1) contains entries after an immediate population. You will note that it contains five data entries (Entry 1 through Entry 5) as well as the associated index entries. To keep things simple, only the common name and object class attributes have been indexed (as shown by the cn and OC keys).

Note: The data does not appear in this actual format. This notation is used for demonstration purposes.

As time goes by, data in the database changes. New entries are added, attribute values for existing entries are modified, and some entries are deleted. This has an effect on both the data entries in the log as well as any associated index entries.

The second log (row 1, column 2) demonstrates the effect on the database after Entry 3 has been modified. The modified data entry is written to the end of the log and the original entry is marked for deletion. The modification was made to an attribute that was not indexed, so the log does not contain any modification to the index entries.

Changes made to data entries containing indexed attributes would not only appear at the end of the log, but the modifications to the indexes would appear there as well. This can be seen in the third log (row 1, column 3). Entry 2 was modified and the change involved a modification to the common name (cn) attribute. Note how the previous index value for this entry is marked for deletion and the new index entry is written to the end of the log.
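To make the two cases concrete, here is a minimal Python model of the behaviour just described. It is a sketch for demonstration purposes only: the `ToyLog` and `Record` classes are hypothetical, and the real log does not store data in this form.

```python
from dataclasses import dataclass, field
from typing import Any, List

# Only cn and objectClass are indexed, mirroring the simplified example in the text.
INDEXED_ATTRIBUTES = {"cn", "objectClass"}


@dataclass
class Record:
    kind: str   # "entry" or "index"
    key: str    # entry id, or "attribute=value" for an index record
    value: Any  # attribute dict for entries, entry id for index records
    obsolete: bool = False


@dataclass
class ToyLog:
    records: List[Record] = field(default_factory=list)

    def _live(self, kind: str, key: str) -> Record:
        """Return the record for this key that has not been marked for deletion."""
        for rec in self.records:
            if rec.kind == kind and rec.key == key and not rec.obsolete:
                return rec
        raise KeyError(key)

    def add_entry(self, entry_id: str, attrs: dict) -> None:
        self.records.append(Record("entry", entry_id, dict(attrs)))
        for attr, val in attrs.items():
            if attr in INDEXED_ATTRIBUTES:
                self.records.append(Record("index", f"{attr}={val}", entry_id))

    def modify_entry(self, entry_id: str, attr: str, new_val: str) -> None:
        old = self._live("entry", entry_id)
        old.obsolete = True  # the original is marked for deletion, not overwritten
        self.records.append(Record("entry", entry_id, {**old.value, attr: new_val}))
        if attr in INDEXED_ATTRIBUTES and attr in old.value:
            # Indexed attribute: retire the old index record and append a new one.
            self._live("index", f"{attr}={old.value[attr]}").obsolete = True
            self.records.append(Record("index", f"{attr}={new_val}", entry_id))


toy = ToyLog()
toy.add_entry("2", {"cn": "Bob", "sn": "Jones"})
toy.modify_entry("2", "sn", "Smith")   # not indexed: one record appended
toy.modify_entry("2", "cn", "Robert")  # indexed: entry and index records appended
print(len(toy.records))
```

Modifying the unindexed `sn` attribute appends a single record, while modifying `cn` appends both a new entry record and a new index record, matching the second and third logs in the diagram.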

The logs found at row 1, column 4 and row 2, column 4 demonstrate how new log files are created as previous ones reach their limit. Write operations are appended to the log file in a linear fashion until it reaches a maximum size of 10 MB at which time a new log is created. This can be seen by the 00000001.jdb log in row 2, column 4.

Note: The maximum log size of 10 MB is defined in the ds-cfg-db-log-file-max attribute contained in the backend definition.

Database Cleanup

The Berkeley C Database simply purged data from the database. This led to fragmentation (“holes”) in the database and required periodic cleanup by system administrators to eliminate the holes (similar to defragmenting your hard drive). This is not the case with the Berkeley DB Java Edition.

The Berkeley DB Java Edition has a number of threads that periodically check the occupancy of each log. If a thread detects that the size associated with active entries has fallen below a certain threshold (50% of the log's maximum size, or 5 MB by default), it rewrites the active records to the end of the latest log file and deletes the old log altogether. This can be seen in the log found at row 2, column 5.

Note: The minimum occupancy is defined in the ds-cfg-db-cleaner-min-utilization attribute contained in the backend definition.

The default occupancy is 50% so at its maximum size, the log will be twice as big as its sum of records. Increasing the occupancy % will reduce the log’s size, but induce more copying, thus increasing CPU utilization.
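The arithmetic behind this trade-off can be written down directly. The functions below are a hedged sketch that follows the per-log description given here; the function names are hypothetical and the actual cleaner heuristics in Berkeley DB JE may differ.

```python
def utilization(live_bytes: int, total_bytes: int) -> float:
    """Fraction of a log that is still occupied by active (non-obsolete) records."""
    return live_bytes / total_bytes if total_bytes else 1.0


def needs_cleaning(live_bytes: int, total_bytes: int, min_utilization: float = 0.5) -> bool:
    """True when occupancy has dropped below the configured minimum."""
    return utilization(live_bytes, total_bytes) < min_utilization


def worst_case_log_size(live_bytes: int, min_utilization: float = 0.5) -> float:
    """Largest total size the log can reach before cleaning starts."""
    return live_bytes / min_utilization


MB = 1024 * 1024
print(needs_cleaning(4 * MB, 10 * MB))        # 40% occupied: below the 50% default
print(needs_cleaning(6 * MB, 10 * MB))        # 60% occupied: left alone
print(worst_case_log_size(100 * MB) / MB)     # at 50%, up to twice the live data
print(worst_case_log_size(100 * MB, 0.8) / MB)  # a higher minimum keeps the log smaller
```

With the default of 50%, 100 MB of live records can occupy up to 200 MB on disk. Raising the minimum to 80% caps that at 125 MB, at the price of more frequent rewriting.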

This process of always appending data to the end of the log and periodically rewriting the log as entries are obsoleted allows OpenDJ to maintain a fairly consistent size – even if entries are heavily modified. It does, however, allow the database to shrink in size if many entries are deleted.

Check out ForgeRock’s website for more information on OpenDJ or click here if you are interested in attending one of ForgeRock’s upcoming training classes.

The Most Complete History of Directory Services You Will Ever Find

Directory Services Timeline

(Until the next one comes along)

| Date | Event | Source |
| --- | --- | --- |
| 1969 | First Arpanet node comes online; first RFC published. | |
| 1973 | Ethernet invented by Xerox PARC researchers. | |
| 1982 | TCP/IP replaces older Arpanet protocols on the Internet. | |
| 1982 | First distributed computing research paper on Grapevine published by Xerox PARC researchers. | |
| 1984 | Internet DNS comes online. | |
| 1986 | IETF formally chartered. | |
| 1989 | Quipu (X.500 software package) released. | |
| 1990 | Estimated number of Internet hosts exceeds 250,000. | |
| 1990 | First version of the X.500 standard published. | |
| 1991 | A team at CERN headed by Tim Berners-Lee releases the first World Wide Web software. | |
| 1992 | University of Michigan developers release the first LDAP software. | |
| 1993 | NDS debuts in NetWare 4.0. | |
| July 1993 | LDAP specification first published as RFC 1487. | |
| December 1995 | First standalone LDAP server (SLAPD) ships as part of U-M LDAP 3.2 release. | |
| April 1996 | Consortium of more than 40 leading software vendors endorses LDAP as the Internet directory service protocol of choice. | |
| 1996 | Netscape hires Tim Howes, Mark Smith, and Gordon Good from the University of Michigan. Howes serves as a directory server architect. | |
| September 1997 | Sun Microsystems releases Sun Directory Services 1.0, derived from U-M LDAP 3.2. | 3 |
| November 1997 | LDAPv3 named the winner of the PC Magazine Award for Technical Excellence. | |
| December 1997 | LDAPv3 approved as a proposed Internet Standard. | |
| 1998 | The OpenLDAP Project is started by Kurt Zeilenga. The project starts by cloning the LDAP reference source from the University of Michigan. | |
| January 1998 | Netscape ships the first commercial LDAPv3 directory server. | |
| March 1998 | Innosoft acquires Mark Wahl’s Critical Angle company and releases LDAP directory server product 4.1 one month later. | |
| July 1998 | Sun Microsystems ships Sun Directory Server 3.1, implementing LDAPv3 standards. | 3 |
| July 1998 | Estimated number of Internet hosts exceeds 36 million. | |
| 1999 | AOL acquires Netscape and forms the iPlanet Alliance with Sun Microsystems. | |
| March 1999 | Innosoft team, led by Mark Wahl, releases Innosoft Distributed Directory Server 5.0. | 3 |
| March 2000 | Sun Microsystems acquires Innosoft and merges the Innosoft directory code with iPlanet. This forms the foundation for the iPlanet Directory Access Router. | 3 |
| October 2001 | The iPlanet Alliance ends and Sun and Netscape fork the codebase. | |
| October 2004 | Apache Directory Server Top Level Project is formed after 1 year in incubation. | 3 |
| December 2004 | Red Hat purchases Netscape Server products. | |
| 2005 | Sun Microsystems initiates the OpenDS project, an open source directory server based on the Java platform. | |
| June 2005 | Red Hat releases Fedora Directory Server. | |
| October 2006 | Apache Directory Server 1.0 is released. | 3 |
| 2007 | UnboundID releases its directory server. | 12 |
| 2008 | AOL stops supporting Netscape products. | |
| April 2009 | Oracle purchases Sun Microsystems. | |
| May 2009 | Red Hat changes the name of Fedora Directory Server to 389 Directory Server. | |
| Feb 1, 2010 | ForgeRock is founded. | 3 |
| Dec 2010 | ForgeRock releases OpenDJ. | |
| July 2011 | Oracle releases Oracle Unified Directory. | |

Sources:

(1) Understanding and Deploying LDAP Directory Services; Second Edition; Timothy A. Howes, Ph.D., Mark C. Smith, and Gordon S. Good.

(2) 389 Directory Server; History (http://directory.fedoraproject.org/wiki/History).

(3) Email exchange with Ludovic Poitou (ForgeRock).

(4) Press Release, March 16th, 1998; “Innosoft Acquires LDAP Technology Leader Critical Angle Inc.” (http://www.pmdf.process.com/press/critical-angle-acquire.html).

(5) OpenLDAP; Wikipedia (http://en.wikipedia.org/wiki/OpenLDAP).

(6) iPlanet; Wikipedia (http://en.wikipedia.org/wiki/IPlanet).

(7) OpenDS; Wikipedia (http://en.wikipedia.org/wiki/OpenDS).

(8) Netscape; Wikipedia (http://en.wikipedia.org/wiki/Netscape).

(9) Press Release, April 20th, 2009; “Oracle Buys Sun” (http://www.oracle.com/us/corporate/press/018363).

(10) 389 Directory Server; 389 Change FAQ (http://directory.fedoraproject.org/wiki/389_Change_FAQ).

(11) OpenDJ; Wikipedia (http://en.wikipedia.org/wiki/OpenDJ).

(12) Email exchange with Nick Crown (UnboundID).

(13) Press Release, July 20th, 2011; “Oracle Announces Oracle Unified Directory 11g” (http://www.oracle.com/us/corporate/press/434211).

Single Sign-On Explained

So what is SSO and why do I care?

SSO is an acronym for “Single Sign-On”. There are various forms of single sign-on with the most common being Enterprise Single Sign-On (ESSO) and Web Single Sign-On (WSSO).

Each method utilizes different technologies to reduce the number of times a user has to enter their username/password in order to gain access to protected resources.

Note: There are various offshoots from WSSO implementations – most notably utilizing proxies or portal servers to act as a central point of authentication and authorization.

Enterprise Single Sign-On

In ESSO deployments, software typically resides on the user’s desktop, which is most commonly running Microsoft Windows. The software detects when a user launches an application that contains username and password fields. It “grabs” a previously saved username/password from either a local file or remote storage (e.g. a special entry in Active Directory), enters these values into the username and password fields, and submits the form on behalf of the user. This process is followed for every new application that is launched that contains a username and password field. It can be used for fat clients (e.g. Microsoft Outlook), thin clients (e.g. Citrix), or Web-based applications (e.g. Web forms), and in most cases the applications themselves are not even aware that the organization has implemented an ESSO solution. There are definite advantages to implementing an ESSO solution in terms of flexibility. The drawback to ESSO solutions, however, is that software needs to be distributed, installed, and maintained on each desktop where applications are launched. Additionally, because the software resides on the desktop, there is no central location in which to determine whether the user is allowed access to the application (authorization or AuthZ). As such, each application must maintain its own set of security policies.

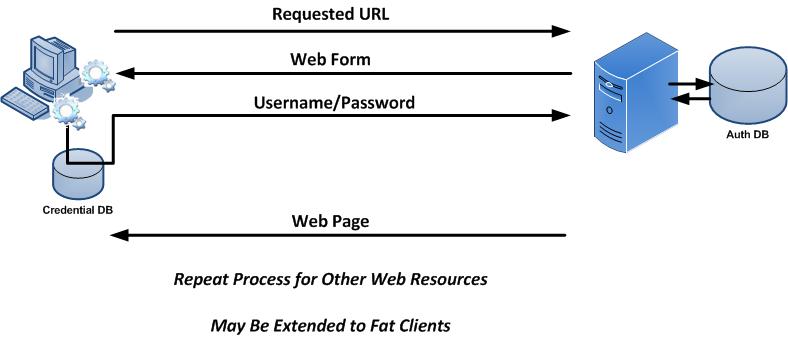

The following diagram provides an overview of the steps performed in ESSO environments.

A user launches an application on their desktop. An agent running in the background detects a login screen from a previously defined template. If this is the first time the user has attempted to access this application, they are prompted to provide their credentials. Once a successful login has been performed, the credentials are stored in a credentials database. This database can be a locally encrypted database or a remote server (such as Active Directory). Subsequent login attempts do not prompt the user for their credentials. Instead, the data is simply retrieved from the credentials database and submitted on behalf of the user.
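The agent logic in these steps can be sketched as follows. This is an illustrative outline only: `CredentialStore`, `handle_login_screen`, and the two callbacks are hypothetical names, and a real ESSO product would encrypt the stored credentials rather than hold them in a plain dictionary.

```python
from typing import Callable, Dict, Optional, Tuple

Credentials = Tuple[str, str]  # (username, password)


class CredentialStore:
    """Stand-in for the credentials database (local file or remote server)."""

    def __init__(self) -> None:
        self._saved: Dict[str, Credentials] = {}  # NOT encrypted: demo only

    def lookup(self, app: str) -> Optional[Credentials]:
        return self._saved.get(app)

    def save(self, app: str, creds: Credentials) -> None:
        self._saved[app] = creds


def handle_login_screen(
    app: str,
    store: CredentialStore,
    prompt_user: Callable[[str], Credentials],
    submit: Callable[[str, Credentials], bool],
) -> bool:
    """Called when the agent recognizes a login screen from a known template."""
    creds = store.lookup(app)
    first_time = creds is None
    if first_time:
        creds = prompt_user(app)  # only the first launch asks the user
    logged_in = submit(app, creds)
    if logged_in and first_time:
        store.save(app, creds)    # remember credentials after a successful login
    return logged_in


prompts = []


def fake_prompt(app: str) -> Credentials:
    prompts.append(app)
    return ("bnelson", "example-password")


store = CredentialStore()
handle_login_screen("mail-client", store, fake_prompt, lambda app, creds: True)
handle_login_screen("mail-client", store, fake_prompt, lambda app, creds: True)
print(len(prompts))  # the user was prompted once for two launches
```

Note that nothing in this flow decides whether the user should be allowed into the application, which is why authorization stays with each application in an ESSO deployment.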

Container-Based Single Sign-On

Session information (such as authenticated credentials) can be shared between Web applications deployed to the same application server. This is single sign-on in its most basic and limited fashion as it can only be used across applications in the same container.

The following diagram provides a high level overview of the steps performed in container-based single sign-on environments.

A user accesses a Web application through a standard Web browser. They are prompted for their credentials which can be basic (such as username and password) or can utilize other forms of authentication (such as multi-factor, X.509 certificates, or biometric). Once the user has authenticated to the application server, they are able to access other applications installed in the same J2EE container without having to re-authenticate (that is, if the other applications have been configured to permit this).

Traditional Web Single Sign-On

In contrast, WSSO deployments only apply to the Web environment and Web-based applications. They do not work with fat clients or thin clients. Software is not installed on the user’s desktop, but instead resides centrally within the Web container or J2EE container of the Web application being protected. The software is often called a “policy agent” and its purpose is to manage both authentication and authorization tasks.

The following diagram provides a high level overview of traditional Web Single Sign-On.

A user first attempts to access a Web resource (such as ADP) through a Web browser. Because they are not yet authenticated to the domain, they are directed to the central authentication server, where they provide their credentials. Once validated, they receive a cookie indicating that they are authenticated to the domain. They are then redirected back to the original Web resource, where they present the cookie. The Web resource consults the authentication server to determine whether the cookie is valid and the session is still active. It also determines whether this user is allowed access to the Web resource. If so, access is granted. If the user then attempts to access another Web resource in the same domain (e.g. Oracle eBusiness Suite), they present the cookie as proof that they are authenticated to the domain. That Web resource consults the authentication server to determine the validity of the cookie, session, and access rights. This process continues for any server in the domain that is protected by WSSO.
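A minimal sketch of this exchange, from the point of view of the central authentication server, might look like the following. The `AuthServer` class, its method names, and the sample users and resources are all hypothetical; a real product would also handle cookie scoping, session timeouts, and hashed passwords.

```python
import secrets
from typing import Dict, Optional, Set


class AuthServer:
    """Toy central authentication server consulted by every protected resource."""

    def __init__(self, users: Dict[str, str], policies: Dict[str, Set[str]]) -> None:
        self._users = users        # username -> password (plain text: demo only)
        self._policies = policies  # resource -> usernames allowed to access it
        self._sessions: Dict[str, str] = {}  # cookie value -> username

    def login(self, username: str, password: str) -> Optional[str]:
        """Validate credentials and hand back a domain cookie value."""
        if username not in self._users or self._users[username] != password:
            return None
        cookie = secrets.token_urlsafe(32)
        self._sessions[cookie] = username
        return cookie

    def check(self, cookie: Optional[str], resource: str) -> str:
        """What a policy agent asks: is the session valid, and is access allowed?"""
        username = self._sessions.get(cookie) if cookie else None
        if username is None:
            return "redirect-to-login"
        if username not in self._policies.get(resource, set()):
            return "denied"
        return "granted"

    def logout(self, cookie: str) -> None:
        """Ending the central session affects every resource at once."""
        self._sessions.pop(cookie, None)


auth = AuthServer(
    users={"alice": "example-password"},
    policies={"payroll": {"alice"}, "erp": set()},
)
print(auth.check(None, "payroll"))    # no cookie yet
cookie = auth.login("alice", "example-password")
print(auth.check(cookie, "payroll"))  # same cookie works for any resource she may use
print(auth.check(cookie, "erp"))      # authenticated, but not authorized
```

Keeping both the session table and the access policies on the central server is what gives WSSO its central authentication, authorization, and logoff.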

Portal or Proxy-Based Single Sign-On

Portal and proxy-based single sign-on solutions are similar to Standard Web Single Sign-On except that all traffic is directed through the central server.

Portal Based Single Sign-On

Target-based policy agents can be avoided by using Portal Servers such as Liferay or SharePoint. In such cases the policy agent is installed in the Portal Server. In turn, the Portal Server acts as a proxy for the target applications and may use technologies such as SAML or auto-form submission. Portal Servers may be customized to dynamically provide access to target systems based on various factors, including the user’s role or group, originating IP address, and time of day. Portal-based single sign-on (PSSO) serves as the foundation for most vendors who are providing cloud-based WSSO products. When implementing PSSO solutions, direct access to target systems is still permitted. This allows users to bypass the Portal, but in doing so they need to remember their application-specific credentials. You can disallow direct access by creating container-specific rules that only allow traffic from the Portal to the application.

Single Sign-On Involving Proxy Servers

Proxy servers are similar to PSSO implementations in that they provide a central point of access. They differ, however, in that they do not provide a graphical user interface. Instead, users are directed to the proxy through various methods (e.g. DNS, load balancers, or Portal Servers). Policy agents are installed in the proxy environment (which may be an appliance) and users are granted or denied access to target resources based on whether they have the appropriate credentials and permission for the target resource.

The following diagram provides a high level overview of centralized single sign-on using Portal or Proxy Servers.

Federation

Federation is designed to enable Single Sign-On and Single Logout between trusted partners across a heterogeneous environment (i.e. different domains). Companies that wish to offer services to their customers or employees enter into a federated agreement with trusted partners who in turn provide the services themselves. Federation enables this partnership by defining a set of open protocols that are used between partners to communicate identity information within a Circle of Trust. Protocols include SAML, Liberty ID-FF, and WS-Federation.

Implementation of federated environments requires coordination between each of its members. Companies have roles to play: some entities act as identity providers (IDP – where users authenticate and credentials are verified) and others as service providers (SP – where the content and/or service originate). Similar to standard Web Single Sign-On, an unauthenticated user attempting to access content on an SP is redirected to an appropriate IDP where their identity is verified. Once the user has successfully authenticated, the IDP creates an XML document called an assertion in which it asserts certain information about the user. The assertion can contain any information that the IDP wishes to share with the SP, but is typically limited to the context of the authentication. Assertions are presented to SPs but are not taken at face value. The manner in which assertions are validated varies with the type of federation being employed and may range from dereferencing artifacts (which are similar to cookies) to verifying the digital signature on an IDP’s signed assertion.

The interaction between the entities involved in a federated environment (user, SP and IDP) is similar to the Web Single Sign-On environment except that authentication is permitted across different domains.

A major difference between federated and WSSO environments involves the type of information generated by the authenticating entity to vouch for the user, and how it is determined that this information is valid and has not been altered in any way.

The following table provides a feature comparison between Web SSO and Enterprise SSO.

| Features | WSSO / PSSO / Proxy | ESSO |
| --- | --- | --- |
| Applications Supported: | Web Only | Web Applications and Fat Clients |
| “Agent” Location: | Target System | User Desktop |
| Technologies: | SAML, Form Submission, Cookies | Form Submission |
| Internal Users? | Yes | Yes (through portal) |
| External Users? | Yes | No |
| Central Authentication? | Yes | No |
| Central Authorization? | Yes | No |
| Central Session Logoff? | Yes | No |
| Global Account Deactivation? | Yes (through password change) | No |

Directory Servers vs Relational Databases

An interesting question was posed on LinkedIn that asked, “If you were the architect of LinkedIn, MySpace, Facebook or other social networking sites and wanted to model the relationships amongst users and had to use LDAP, what would the schema look like?”

You can find the original post and responses here.

After reading the responses from other LinkedIn members, I felt compelled to add my proverbial $.02.

Directory Servers are simply special purpose data repositories. They are great for some applications and not so great for others. You can extend the schema and create a tree structure to model just about any kind of data for any type of application. But just because you “can” do something does not mean that you “should” do it.

The question becomes “Should you use a directory server or should you use a relational database?” For some applications a directory server would be a definite WRONG choice, for others it is clearly the RIGHT one, and for yet others the choice is not so clear. So, how do you decide?

Here are some simple rules of thumb that I have found work for me:

1) How often does your data change?

Keep in mind that directory servers are optimized for reads — this oftentimes comes at the expense of write operations. The reason is that directory servers typically implement extensive indexes that are tied to schema attributes (which by the way are tied to the application fields). So the question becomes, how often do these attributes change? If they do so often, then a directory server may not be the best choice (as you would be constantly rebuilding the indexes). If, however, they are relatively static, then a directory server would be a great choice.

2) What type of data are you trying to model?

If your data can be described as an attribute:value pair (e.g., name:Bill Nelson), then a directory server would be a good choice. If, however, your data is not so discrete, then a directory server should not be used. For instance, uploads to YouTube should NOT be kept in a directory server. User profiles in LinkedIn, however, would be a good fit.

3) Can your data be modeled in a hierarchical (tree-like) structure?

Directory servers implement a hierarchical structure for data modeling (similar to a file system layout). A benefit of a directory server is the ability to apply access control at a particular point in the tree and have that apply to all child elements in the tree structure. Additionally, you can start a search at a lower point in the tree (a child element) and improve search performance (much like selecting the proper starting point for the Unix “find” command). Relational databases have no direct equivalent; without a suitable index, you have to search every row in the table. If your data lends itself to a hierarchical structure, then a directory server might be a good choice.
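The effect of choosing a search base can be illustrated with a small Python model. The sample entries and the `in_subtree` and `search` helpers are hypothetical, and the string comparison is a simplification: a real directory server normalizes distinguished names properly and uses its indexes rather than scanning a dictionary.

```python
from typing import Dict, List

# Hypothetical directory information tree, keyed by distinguished name (DN).
ENTRIES: Dict[str, Dict[str, str]] = {
    "dc=example,dc=com": {"objectClass": "domain"},
    "ou=people,dc=example,dc=com": {"objectClass": "organizationalUnit"},
    "uid=bnelson,ou=people,dc=example,dc=com": {"objectClass": "person", "cn": "Bill Nelson"},
    "ou=partners,dc=example,dc=com": {"objectClass": "organizationalUnit"},
    "uid=bnelson,ou=partners,dc=example,dc=com": {"objectClass": "person", "cn": "Bill Nelson"},
}


def in_subtree(dn: str, base_dn: str) -> bool:
    """True if dn is the base itself or sits anywhere beneath it (simplified match)."""
    dn, base_dn = dn.lower(), base_dn.lower()
    return dn == base_dn or dn.endswith("," + base_dn)


def search(entries: Dict[str, Dict[str, str]], base_dn: str, attr: str, value: str) -> List[str]:
    """Subtree search: only entries at or below base_dn are candidates."""
    return [
        dn
        for dn, attrs in entries.items()
        if in_subtree(dn, base_dn) and attrs.get(attr) == value
    ]


# Searching from the top of the tree considers every branch.
print(search(ENTRIES, "dc=example,dc=com", "cn", "Bill Nelson"))
# Starting lower in the tree limits the candidates to one branch.
print(search(ENTRIES, "ou=people,dc=example,dc=com", "cn", "Bill Nelson"))
```

The same "at or below this point" test is what lets an access control rule placed on `ou=people` apply to every entry underneath it.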

I am a big fan of directory servers and have architected/implemented projects that sit 100% on top of a directory, 100% on top of relational databases, and on a hybrid of both. Directory servers are extremely fast, flexible, scalable, and able to handle the type of traffic you see on the Internet very well. Their ability to implement chaining, referrals, web services, and a flexible data modeling structure makes them a very nice choice as a data repository for many applications, but I would not lead with a directory server for every application.

So how do you decide which is best? It all comes down to the application, itself, and the way you want to access your data.

A site like LinkedIn might actually be modeled pretty well with a directory server, as quite a bit of the content is static, lends itself well to attribute:value pairs, and can easily be modeled in a hierarchical structure. The user profiles for a site like Facebook or YouTube could easily be modeled in a directory server, but I would NOT attempt to store the YouTube or Facebook uploads or the “what are you working on now” status in a directory server, as that content is constantly changing.

If you do decide to use a directory server, here are the general steps you should consider for development (your mileage may vary, but probably not too much):

- Evaluate the data fields that you want to access from your application

- Map the fields to existing directory server schema (extend if necessary).

- Build a hierarchical structure to model your data as appropriate (this is called the directory information tree, or DIT)

- Architect a directory solution based on where your applications reside throughout the world (do you need one, two, or multiple directories?) and then determine how you want your data to flow through the system (chaining, referrals, replication)

- Implement the appropriate access control for attributes or the DIT in general

- Implement an effective indexing strategy to increase performance

- Test, test, test